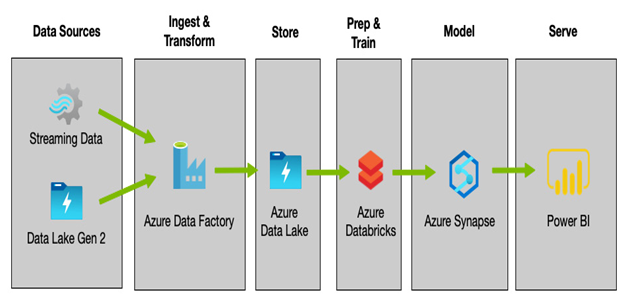

For many, a combination of tools may be the best option, and, depending on your exact requirements, you may use a combination of toolsets for different projects. As an all-encompassing example, however, the following diagram shows how all these products—and more—can be combined into a single solution:

Figure 13.9 – Example solution combining multiple technologies

As you can see, the services can work together as part of an overall data analytics solution, and although their capabilities sometimes overlap, combining multiple components gives the greatest flexibility.

Summary

This chapter looked at a growing capability in the cloud, especially in Azure—data integration and analytics.

Azure provides a range of tools for creating end-to-end data pipelines that store, ingest, transform, aggregate, and analyze data. We therefore started the chapter with a high-level view of what a typical pipeline might look like.

We looked at how to configure Azure Storage to use ADLS Gen2, what extra capabilities this gives you, and how Azure Data Factory can create automated and secure pipelines for data loading and transformation.

Finally, we looked at the two primary tools for exploring and analyzing data with Azure: Azure Databricks and Azure Synapse Analytics.

After reading this chapter, you should have a better understanding of the different components that comprise a data analytics solution, including the strengths of each service and where one might be a better choice than another.

In the next chapter, we conclude Part 4, Applications and Databases, by looking at the different ways to architect solutions that enable automated resilience and redundancy.

Exam scenario

MegaCorp Inc. is building a new data analytics capability to help understand its marketing campaigns’ effectiveness and how they relate to product sales.

Marketing campaign data is exported daily and stored as flat CSV files. Sales data is exported overnight from the sales database into a normalized data warehouse database.

The management team would like data to be automatically imported, aggregated, and then modeled. Large amounts of data are expected, and processing needs to complete relatively quickly. The data analytics team is made up of seasoned developers who currently use the latest version of Spark.

Design an end-to-end solution that can accommodate the management team’s requirements.
